I am an Associate Professor at the College of Computing and Data Science, Nanyang Technological University, Singapore. My research interests lie broadly in Multi-modal Learning, Data-centric AI, Machine Learning, and Computational Narrative Intelligence.

My notable work in multimodal learning includes InstructBLIP, Plug-and-Play VQA, and VisualGPT. My work in Data-centric AI covers the understanding of datasets (factor analysis of datasets), the influence of data on model decisions (HyDRA), the selection of training data (COINCIDE), and the synthesis of training data with generative AI (SPARCL).

Another research direction is to build AI that understands commonsense causal relations between events. Examples include "heavy sweating causes thirst" and "hearing compliments makes people happy". Such causal conditions are neither necessary nor sufficient, as they are context-dependent. However, they are indispensable to a world model that enables AI to interpret the past and to plan for the future. Recent representative work includes benchmarks (Causal2Needle, BlackSwanSuite and M-SyMoN) and a technique to extract such knowledge from LLMs, though my work in this direction traces back to my PhD work on learning event graphs.

Prior to NTU, I was a Senior Research Scientist at Baidu Research USA, and a Research Scientist and Group Leader at Disney Research. I received my Ph.D. degree from Georgia Institute of Technology.

I teach a PhD-level Deep Learning course, CE7454, which consistently receives student scores of 90/100 or above. You can find one slide deck here.

Email: boyang.li@ntu.edu.sg

Selected Papers

Haoxin Li, Yingchen Yu, Qilong Wu, Hanwang Zhang, Song Bai, and Boyang Li. Learning to Animate Images from A Few Videos to Portray Delicate Human Actions. Winter Conference on Applications of Computer Vision (WACV). 2026.

TL;DR: We create videos from a given first frame and outperform many commercial video generation techniques on delicate actions.

[Paper] [Project Page] [bibtex]

Haoxin Li and Boyang Li. Enhancing Vision-Language Compositional Understanding with Multimodal Synthetic Data. The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2025.

TL;DR: Efficient and controllable synthesis of multimodal training data, which improves compositional understanding of CLIP.

[Paper] [Code] [bibtex]

Aditya Chinchure, Sahithya Ravi, Raymond T. Ng, Vered Shwartz, Boyang Li, and Leonid Sigal. Black Swan: Abductive and Defeasible Video Reasoning in Unexpected Events. The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2025.

TL;DR: A vision-language benchmark for abductive reasoning (inferring cause from observation) and defeasible reasoning (correcting old beliefs with new evidence) using short video stories containing surprising events.

[Paper] [Data] [Project Page] [bibtex]

Tong Zhang, Mengao Zhang, Wei Yan Low, X. Jessie Yang, and Boyang Li. Conversational Explanations: Discussing Explainable AI with Non-AI Experts. The ACM Conference on Intelligent User Interfaces (IUI). 2025.

TL;DR: We build a conversational agent that explains four popular explanation techniques for image classifiers using synthetic data.

[Paper] [bibtex]

Tong Zhang, X. Jessie Yang, and Boyang Li. May I Ask a Follow-up Question? Understanding the Benefits of Conversations in Neural Network Explainability. International Journal of Human-Computer Interaction. 2024.

TL;DR: We empirically show that conversational explanations of machine learning, which allow users to ask follow-up questions about the explanations, are more effective than static, one-shot explanations.

[Paper] [bibtex]

Jaewoo Lee, Boyang Li, and Sung Ju Hwang. Concept-skill Transferability-based Data Selection for Large Vision-Language Models. EMNLP 2024.

TL;DR: We prune vision-language instruction tuning data by (1) identifying data clusters that represent semantic concepts and skills, and (2) selecting representative data from clusters that transfer well to other clusters.

[Paper] [Code] [bibtex]

Anthony Tiong, Junqi Zhao, Boyang Li, Junnan Li, Steven Hoi, and Caiming Xiong. What Are We Measuring When We Evaluate Large Vision-Language Models? An Analysis of Latent Factors and Biases. NAACL 2024.

TL;DR: We identify the capabilities that vision-language datasets actually evaluate using Factor Analysis, a data-driven approach for separating intellectual capabilities. We also identify a length bias in evaluation and release a new evaluation dataset, OLIVE.

[Paper] [Dataset] [bibtex]

Yidan Sun, Qin Chao, and Boyang Li. Event Causality Is Key to Computational Story Understanding. The 2024 Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL). (Oral Presentation) 2024.

TL;DR: We demonstrate the value of LLM-extracted causal relations between events in story understanding tasks.

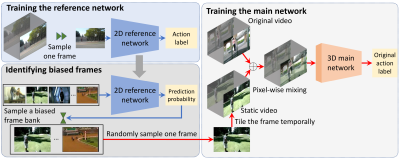

[Paper] [Code] [bibtex]

Haoxin Li, Yuan Liu, Hanwang Zhang, and Boyang Li. Mitigating and Evaluating Static Bias of Action Representations in the Background and the Foreground. International Conference on Computer Vision (ICCV) (Oral Presentation). 2023.

TL;DR: Video classifiers often rely on static features to make biased predictions. We show that static bias is caused not only by the background but also by the foreground, such as a human actor's outfit or instrument. We propose a theory-inspired method to mitigate bias without having to locate the source.

[Paper] [Supplemental] [Code] [bibtex]

Wenliang Dai, Junnan Li, Dongxu Li, Anthony Meng Huat Tiong, Junqi Zhao, Weisheng Wang, Boyang Li, Pascale Fung, and Steven Hoi. InstructBLIP: Towards General-purpose Vision-Language Models with Instruction Tuning. NeurIPS. 2023.

TL;DR: An instruction-tuned vision-language model that achieves state-of-the-art performance on several benchmarks.

[Paper] [Code] [Model Zoo] [bibtex]

Yidan Sun, Qin Chao, Yangfeng Ji, and Boyang Li. Synopses of Movie Narratives: a Video-Language Dataset for Story Understanding. ArXiv Preprint 2203.05711. 2022.

TL;DR: A large, plot-level, multimodal dataset for story understanding. The storytelling techniques of the paper create unique challenges for the current generation of multimodal networks.

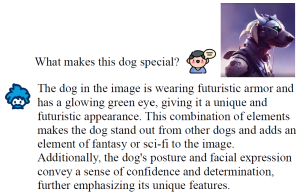

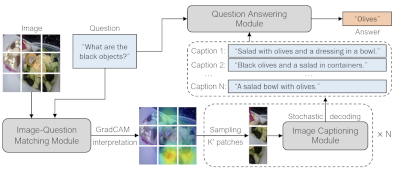

[Paper] [Data] [bibtex]

Anthony Meng Huat Tiong, Junnan Li, Boyang Li, Silvio Savarese, and Steven C.H. Hoi. Plug-and-Play VQA: Zero-shot VQA by Conjoining Large Pretrained Models with Zero Training. Findings of the Conference on Empirical Methods in Natural Language Processing (Findings of EMNLP). 2022.

TL;DR: An unexpected modular approach to visual question answering. We translate relevant portions of an image to text and rely entirely on text-based reasoning. On VQAv2, the results are better than DeepMind's Flamingo by 8.5%. This contrasts with the conventional wisdom that end-to-end learning is necessary for good performance.

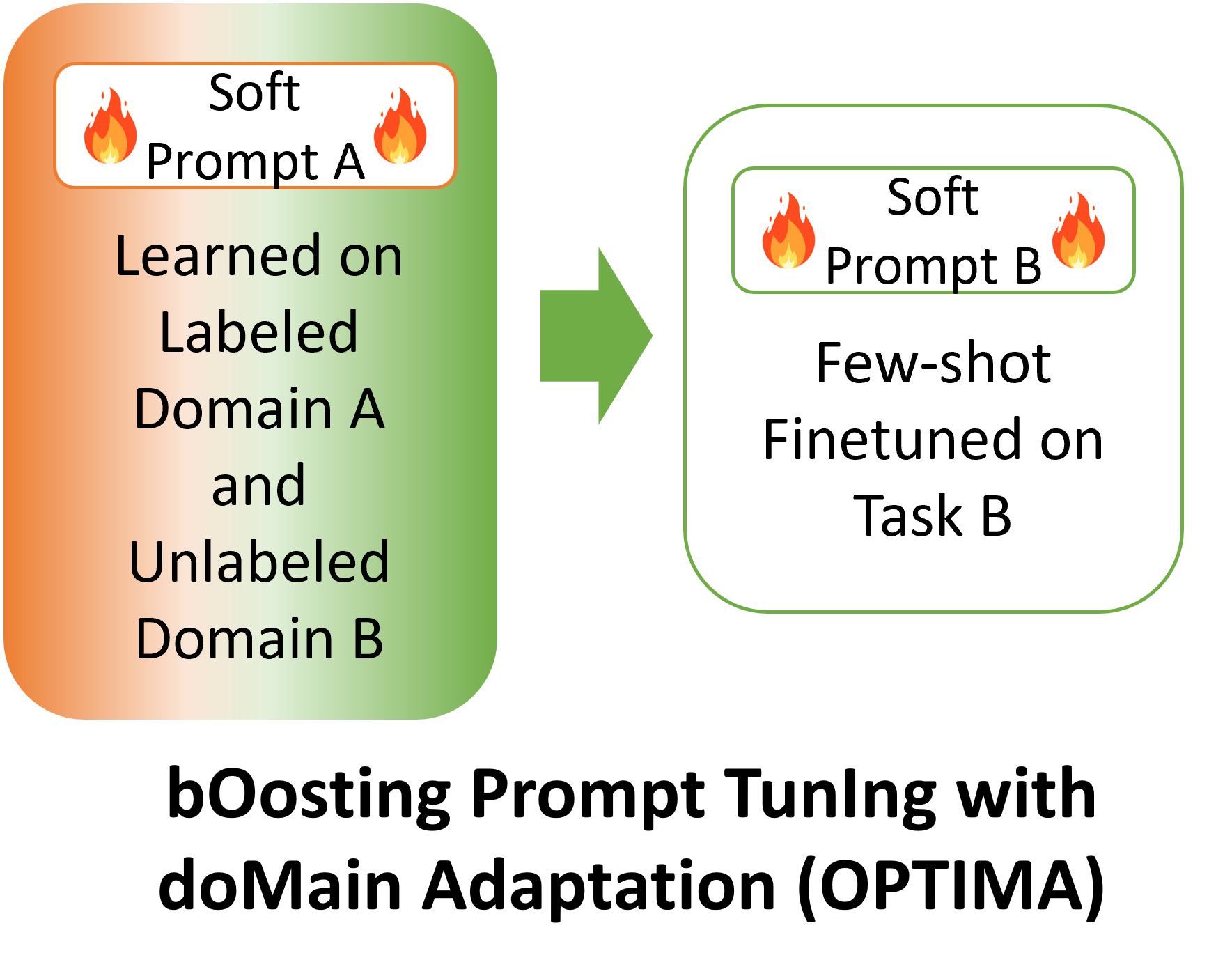

[Paper] [Video] [Code] [bibtex]

Xu Guo, Boyang Li, and Han Yu. Improving the Sample Efficiency of Prompt Tuning with Domain Adaptation. Findings of the Conference on Empirical Methods in Natural Language Processing (Findings of EMNLP). 2022.

TL;DR: Prompt tuning is great for large model deployment but requires a lot of training data. To our knowledge, we are the first to use unlabeled data in the target domain to improve prompt tuning.

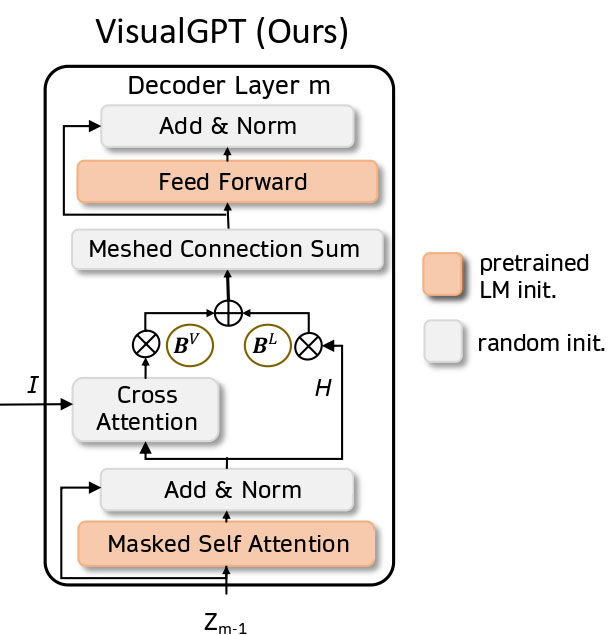

[Paper] [Code] [bibtex]

Jun Chen, Han Guo, Kai Yi, Boyang Li, and Mohamed Elhoseiny. VisualGPT: Data-efficient Adaptation of Pretrained Language Models for Image Captioning. The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2022.

TL;DR: We developed a data-efficient method to adapt large-scale pretrained language models for image captioning and achieved SOTA results on X-ray image captioning.

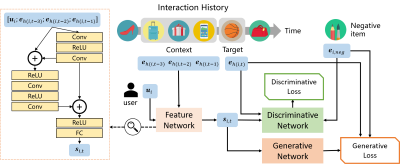

[Paper] [Code] [bibtex]

Yinan Zhang, Boyang Li, Yong Liu, Hao Wang, and Chunyan Miao. Initialization Matters: Regularizing Manifold-informed Initialization for Neural Recommendation Systems. ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD). 2021.

TL;DR: If neural recommenders perform poorly (e.g., worse than well-tuned k-nearest-neighbors), it is probably because they do not use this data-dependent, manifold-informed initialization.

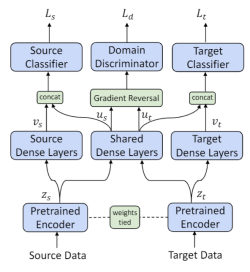

[Paper] [Video] [Code] [bibtex]

Xu Guo, Boyang Li, Han Yu, and Chunyan Miao. Latent-Optimized Adversarial Neural Transfer for Sarcasm Detection. The Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL-HLT). 2021.

TL;DR: Sarcasm detection is an ideal problem for transfer learning. We identify the competition between losses in adversarial transfer learning and propose a modified optimization technique to solve the problem, which achieves the SOTA result on the iSarcasm dataset.

[Paper] [Code] [bibtex]

Chang Liu, Han Yu, Boyang Li, Zhiqi Shen, Zhanning Gao, Peiran Ren, Xuansong Xie, Lizhen Cui, and Chunyan Miao. Noise-resistant Deep Metric Learning with Ranking-based Instance Selection. The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). 2021.

TL;DR: We introduce a simple and efficient technique, which we believe is the first, for deep metric learning under noisy training data; the method outperforms 12 baseline methods under both synthetic and natural noise.

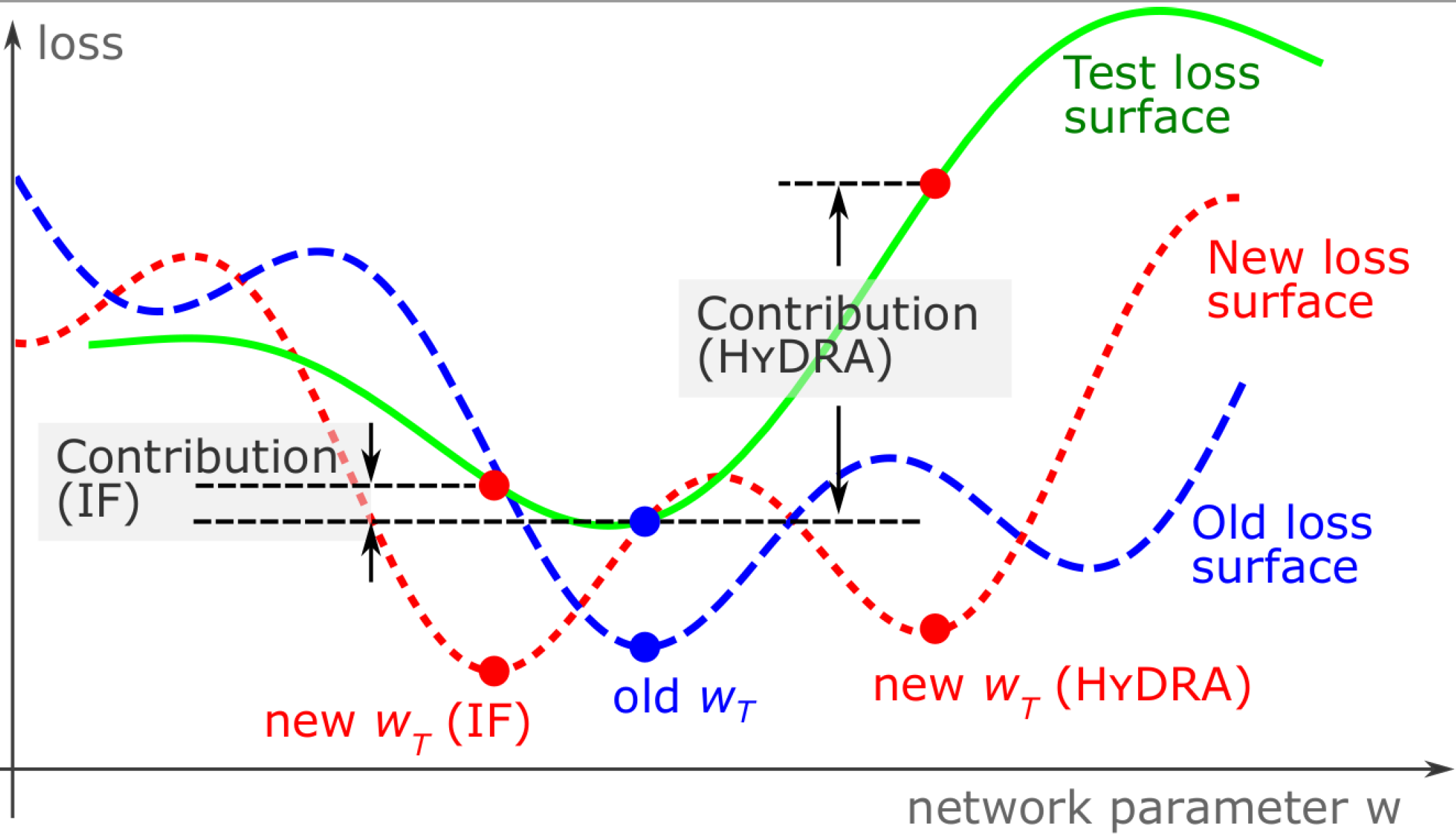

[Paper] [Supplemental] [Video] [Video (Chinese)] [Code] [bibtex]

Yuanyuan Chen, Boyang Li, Han Yu, Pengcheng Wu, and Chunyan Miao. HyDRA: Hypergradient Data Relevance Analysis for Interpreting Deep Neural Networks. The AAAI Conference on Artificial Intelligence (AAAI). 2021.

TL;DR: We provide an approximate hypergradient method for estimating how training data contribute to individual network predictions and a theoretical bound on the approximation error.

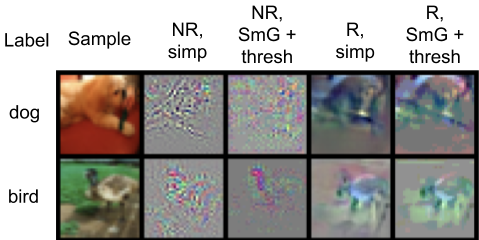

[Paper] [Supplemental] [Code] [bibtex]

Adam Noack, Isaac Ahern, Dejing Dou, and Boyang Li. An Empirical Study on the Relation between Network Interpretability and Adversarial Robustness. Springer Nature Computer Science. 2020.

TL;DR: Does the interpretability of neural networks imply robustness against adversarial attack? We provide some positive empirical evidence.

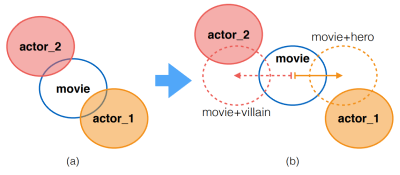

[Paper] [Code] [bibtex]

Hannah Kim, Denys Katerenchuk, Daniel Billet, Jun Huan, Haesun Park, and Boyang Li. Understanding Actors and Evaluating Personae with Gaussian Embeddings. The AAAI Conference on Artificial Intelligence (AAAI). 2019.

TL;DR: We computationally model movie casting decisions and actors' versatility.

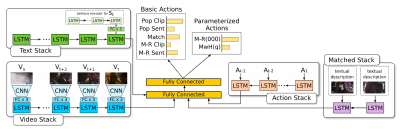

[Paper] [Code & Data] [bibtex]

Pelin Dogan, Boyang Li, Leonid Sigal, and Markus Gross. A Neural Multi-sequence Alignment TeCHnique (NeuMATCH). The Conference on Computer Vision and Pattern Recognition (CVPR). 2018.

TL;DR: We propose the first end-to-end optimizable network for aligning video and text sequences.

[Paper] [Data] [bibtex]

Ng Annalyn, Maarten Bos, Leonid Sigal, and Boyang Li. Predicting Personality from Book Preferences with User-Generated Content Labels. IEEE Transactions on Affective Computing. 2018.

TL;DR: We can infer your personality from the books you read.

[Paper] [bibtex]

Hiring

I have multiple open positions for Ph.D. students, postdocs, and research engineers. Please send me your CV.

What's New

- Sep 2025: 2 papers accepted to NeurIPS 2025.

- May 2025: 1 paper accepted to ICML 2025.

- Mar 2025: 2 papers accepted to CVPR 2025.

- Feb 2025: I will serve as the General Chair of the Singapore Symposium on Natural Language Processing 2025.

- Sep 2024: 4 papers accepted to EMNLP (1 Main, 3 Findings).

- Aug 2024: I was tenured.

- Aug 2024: I received a Young Faculty Research Award (Special Mention) from the College of Engineering, NTU.

- Jul 2024: 1 paper accepted to the Conference on Language Modeling (COLM).

- Jun 2024: 1 paper accepted to the International Journal of Human-Computer Interaction.

- Mar 2024: 2 papers accepted to NAACL 2024.

- Jan/Feb 2024: 1 AAAI paper and 1 CVPR paper accepted.

- Dec 2023: I presented recent work as an invited speaker at the Workshop on Effective Multimodal Perception and Interactive Learning, at the 6th Asia Conference on Cognitive Engineering and Intelligent Interaction.

- Nov 2023: I presented recent work as an invited speaker at the Workshop on Large Generative Models Meet Multimodal Applications, ACM Multimedia.

- Aug 2023: 1 paper accepted to ACM Computing Surveys.

- Jul 2023: 1 paper accepted to ICCV as an Oral presentation (~1% of submissions) and 1 paper accepted to ACM MM.

- Jun 2023: Presented recent work at the University of British Columbia, Canada. [Slides]

- Jun 2023: Attended CVPR in Vancouver, Canada.

- Apr 2023: Visited the University of Malaya in Kuala Lumpur, Malaysia.

- Mar 2023: One paper on Visual Question Answering accepted to CVPR 2023. One paper accepted to ICME 2023.

- Feb 2023: I presented recent work at Tsinghua University and Shandong University.

- Jan 2023: I served as a Senior Action Editor for ACL Rolling Review.

- Dec 2022: I was at EMNLP 2022 in Abu Dhabi, UAE.

- Oct 2022: Three papers accepted to EMNLP Findings 2022.

- Mar 2021: Received the NRF Fellowship award with funding of 2.5M SGD.